Migrating JSON files into Drupal

Today we will learn how to migrate content from a JSON file into Drupal using the Migrate Plus module. We will show how to configure the migration to read files from the local file system and from remote locations. The example includes node, image, and paragraph migrations. Let’s get started.

Note: Migrate Plus has many more features. For example, it contains source plugins to import from XML files and SOAP endpoints. It provides many useful process plugins for DOM manipulation, string replacement, transliteration, etc. The module also lets you define migration plugins as configurations and create groups to share settings. It offers a custom event to modify the source data before processing begins. In today’s blog post, we are focusing on importing JSON files. Other features will be covered in future entries.

Getting the code

You can get the full code example at https://github.com/dinarcon/ud_migrations. The module to enable is `UD JSON source migration`, whose machine name is `ud_migrations_json_source`. It comes with four migrations: `udm_json_source_paragraph`, `udm_json_source_image`, `udm_json_source_node_local`, and `udm_json_source_node_remote`.

You can get the Migrate Plus module using Composer: `composer require 'drupal/migrate_plus:^5.0'`. This will install the `8.x-5.x` branch, where new development will happen. This branch was created to introduce breaking changes in preparation for Drupal 9. As of this writing, the `8.x-4.x` branch has feature parity with the newer branch. If your Drupal site is not Composer-based, you can download the module manually.

Understanding the example setup

This migration will reuse the same configuration from the introduction to paragraph migrations example. Refer to that article for details on the configuration: the destinations will be the same content type, paragraph type, and fields. The source will be changed in today’s example, as we use it to explain JSON migrations. The end result will again be nodes containing an image and a paragraph with information about someone’s favorite book. The major difference is that we are going to read from JSON. In fact, three of the migrations will read from the same file. The following snippet shows a reduced version of the file to get a sense of its structure:

```json
{
  "data": {
    "udm_people": [
      {
        "unique_id": 1,
        "name": "Michele Metts",
        "photo_file": "P01",
        "book_ref": "B10"
      },
      {...},
      {...}
    ],
    "udm_book_paragraph": [
      {
        "book_id": "B10",
        "book_details": {
          "title": "The definite guide to Drupal 7",
          "author": "Benjamin Melançon et al."
        }
      },
      {...},
      {...}
    ],
    "udm_photos": [
      {
        "photo_id": "P01",
        "photo_url": "https://agaric.coop/sites/default/files/pictures/picture-15-1421176712.jpg",
        "photo_dimensions": [240, 351]
      },
      {...},
      {...}
    ]
  }
}
```

Note: You can literally swap migration sources without changing any other part of the migration. This is a powerful feature of ETL frameworks like Drupal’s Migrate API. Although this is possible, the example includes slight changes to demonstrate various plugin configuration options. Also, some machine names had to be changed to avoid conflicts with other examples in the demo repository.

Migrating nodes from a JSON file

In any migration project, understanding the source is very important. For JSON migrations, there are two major considerations. First, where in the file hierarchy does the data you want to import lie? It can be at the root of the file or several levels deep in the hierarchy. Second, once you get to the array of records that you want to import, which fields are going to be made available to the migration? It is possible that each record contains more data than needed. For improved performance, it is recommended to manually include only the fields that will be required for the migration. The following code snippet shows part of the local JSON file relevant to the node migration:

```json
{
  "data": {
    "udm_people": [
      {
        "unique_id": 1,
        "name": "Michele Metts",
        "photo_file": "P01",
        "book_ref": "B10"
      },
      {...},
      {...}
    ]
  }
}
```

The array of records containing node data lies two levels deep in the hierarchy: it starts with `data` at the root and then descends one level to `udm_people`. Each element of this array is an object with four properties:

- `unique_id` is the unique identifier for each record within the `/data/udm_people` hierarchy.

- `name` is the name of a person. This will be used in the node title.

- `photo_file` is the unique identifier of an image that was created in a separate migration.

- `book_ref` is the unique identifier of a book paragraph that was created in a separate migration.

The following snippet shows the configuration to read a local JSON file for the node migration:

```yaml
source:
  plugin: url
  data_fetcher_plugin: file
  data_parser_plugin: json
  urls:
    - modules/custom/ud_migrations/ud_migrations_json_source/sources/udm_data.json
  item_selector: /data/udm_people
  fields:
    - name: src_unique_id
      label: 'Unique ID'
      selector: unique_id
    - name: src_name
      label: 'Name'
      selector: name
    - name: src_photo_file
      label: 'Photo ID'
      selector: photo_file
    - name: src_book_ref
      label: 'Book paragraph ID'
      selector: book_ref
  ids:
    src_unique_id:
      type: integer
```

The name of the plugin is `url`. Because we are reading a local file, the `data_fetcher_plugin` is set to `file` and the `data_parser_plugin` to `json`. The `urls` configuration contains an array of file paths relative to the Drupal root. In the example we are reading from one file only, but you can read from multiple files at once. In that case, it is important that they have a homogeneous structure. The settings that follow will apply equally to all the files listed in `urls`.

The `item_selector` configuration indicates where in the JSON file the array of records to be migrated lies. Its value is an XPath-like string used to traverse the file hierarchy. In this case, the value is `/data/udm_people`. Note that you separate each level in the hierarchy with a slash (/).
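To make the traversal concrete, here is a small Python sketch that mimics how an `item_selector` walks the decoded JSON, one slash-separated segment at a time. It only illustrates the idea; it is not Migrate Plus's PHP implementation, and the `select_items` helper is hypothetical.

```python
import json

# A trimmed copy of udm_data.json, using the sample values shown in this article.
SOURCE = json.loads("""
{
  "data": {
    "udm_people": [
      {"unique_id": 1, "name": "Michele Metts", "photo_file": "P01", "book_ref": "B10"}
    ]
  }
}
""")


def select_items(document, item_selector):
    """Descend through the decoded JSON one slash-separated segment at a time."""
    current = document
    # Empty segments (from leading or trailing slashes) are skipped.
    for key in (segment for segment in item_selector.split("/") if segment):
        current = current[key]
    return current


records = select_items(SOURCE, "/data/udm_people")
for record in records:
    print(record["unique_id"], record["name"])  # 1 Michele Metts
```

Each element of the returned list is one record to migrate; the field selectors described next are evaluated relative to it.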

`fields` has to be set to an array. Each element represents a field that will be made available to the migration. The following options can be set:

- `name` is required. This is how the field is going to be referenced in the migration. The name itself can be arbitrary. If it contains spaces, you need to put double quotation marks (") around it when referring to it in the migration.

- `label` is optional. This is a description used when presenting details about the migration, for example, in the user interface provided by the Migrate Tools module. Even when a label is defined, you do not use it to refer to the field; keep using the name.

- `selector` is required. This is another XPath-like string to find the field to import. The value must be relative to the location specified by the `item_selector` configuration. In the example, the fields are direct children of the records to migrate. Therefore, only the property name is specified (e.g., `unique_id`). If you had nested objects or arrays, you would use a slash (/) character to go deeper in the hierarchy. This will be demonstrated in the image and paragraph migrations.
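The relationship between `name`, `label`, and `selector` can be modeled in a few lines of Python. This is a sketch under the assumption that each selector is resolved relative to a single record; the helpers `get_by_selector` and `build_row` are hypothetical and do not reproduce Migrate Plus's code.

```python
def get_by_selector(record, selector):
    """Follow a selector that is relative to one record (the item_selector's level)."""
    value = record
    for key in (part for part in selector.split("/") if part):
        value = value[key]
    return value


# Mirrors the `fields` list of the node migration.
FIELDS = [
    {"name": "src_unique_id", "label": "Unique ID", "selector": "unique_id"},
    {"name": "src_name", "label": "Name", "selector": "name"},
    {"name": "src_photo_file", "label": "Photo ID", "selector": "photo_file"},
    {"name": "src_book_ref", "label": "Book paragraph ID", "selector": "book_ref"},
]


def build_row(record, fields):
    """Keep only the configured fields, keyed by `name` (labels are display-only)."""
    return {field["name"]: get_by_selector(record, field["selector"]) for field in fields}


record = {"unique_id": 1, "name": "Michele Metts", "photo_file": "P01", "book_ref": "B10"}
row = build_row(record, FIELDS)
print(row)
```

The resulting row is keyed by `src_unique_id`, `src_name`, and so on, which is why the process section refers to fields by their `name` rather than their `label`.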

Finally, you specify an `ids` configuration listing the field names, along with their data types, that uniquely identify each record. As already stated, the `src_unique_id` field serves that purpose. The following snippet shows part of the process, destination, and dependencies configuration of the node migration:

```yaml
process:
  field_ud_image/target_id:
    plugin: migration_lookup
    migration: udm_json_source_image
    source: src_photo_file
destination:
  plugin: 'entity:node'
  default_bundle: ud_paragraphs
migration_dependencies:
  required:
    - udm_json_source_image
    - udm_json_source_paragraph
  optional: []
```

The `source` for setting the image reference is `src_photo_file`. Again, this is the `name` of the field, not the `label` or the `selector`. The configuration of the migration lookup plugin and the dependencies point to two JSON migrations that come with this example: one for migrating images and the other for migrating paragraphs.
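Conceptually, `migration_lookup` consults what `udm_json_source_image` recorded about the items it already imported and translates a source id such as `P01` into the id of the entity created for it. That is also why the image migration is listed as a required dependency: it has to run first. The Python below only models that idea with a dictionary; the map contents and the `lookup` helper are hypothetical, and the real plugin has options that are not modeled here.

```python
# Hypothetical contents of the image migration's map: source photo id -> file id.
# Real ids are assigned by Drupal when the images are imported.
IMAGE_MIGRATION_MAP = {"P01": 17, "P02": 18}


def lookup(migration_map, source_value):
    """Translate a source id into a destination id, or None if it was never migrated."""
    return migration_map.get(source_value)


row = {"src_photo_file": "P01"}
# Same idea as `field_ud_image/target_id` using migration_lookup on src_photo_file.
target_id = lookup(IMAGE_MIGRATION_MAP, row["src_photo_file"])
print(target_id)  # 17
```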

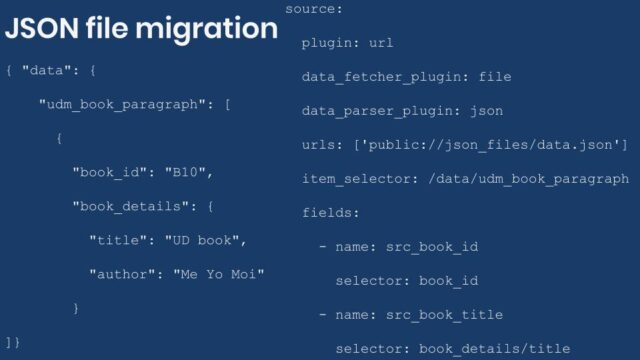

Migrating paragraphs from a JSON file

Let’s consider an example where the records to migrate have many levels of nesting. The following snippets show part of the local JSON file and source plugin configuration for the paragraph migration:

```json
{
  "data": {
    "udm_book_paragraph": [
      {
        "book_id": "B10",
        "book_details": {
          "title": "The definite guide to Drupal 7",
          "author": "Benjamin Melançon et al."
        }
      },
      {...},
      {...}
    ]
  }
}
```

```yaml
source:
  plugin: url
  data_fetcher_plugin: file
  data_parser_plugin: json
  urls:
    - modules/custom/ud_migrations/ud_migrations_json_source/sources/udm_data.json
  item_selector: /data/udm_book_paragraph
  fields:
    - name: src_book_id
      label: 'Book ID'
      selector: book_id
    - name: src_book_title
      label: 'Title'
      selector: book_details/title
    - name: src_book_author
      label: 'Author'
      selector: book_details/author
  ids:
    src_book_id:
      type: string
```

The `plugin`, `data_fetcher_plugin`, `data_parser_plugin` and `urls` configurations have the same values as in the node migration. The `item_selector` and `ids` configurations are slightly different to represent the path to paragraph records and the unique identifier field, respectively.

The interesting part is the value of the `fields` configuration. Taking `/data/udm_book_paragraph` as a starting point, the records with paragraph data have a nested structure. Particularly, `book_details` is an object with two properties: `title` and `author`. To refer to them, the selectors are `book_details/title` and `book_details/author`, respectively. Note that you can go as many levels deep in the hierarchy as needed to find the value that should be assigned to the field. Every level in the hierarchy is separated by a slash (/).
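The following Python sketch resolves the paragraph migration's nested selectors against one record, descending one key per slash. It is an illustration of the lookup, not Migrate Plus's implementation; `get_by_selector` is a hypothetical helper.

```python
def get_by_selector(record, selector):
    """Descend into nested objects, one slash-separated key per level."""
    value = record
    for key in (part for part in selector.split("/") if part):
        value = value[key]
    return value


book = {
    "book_id": "B10",
    "book_details": {
        "title": "The definite guide to Drupal 7",
        "author": "Benjamin Melançon et al.",
    },
}

# name -> selector, as in the paragraph migration's `fields` list.
fields = {
    "src_book_id": "book_id",
    "src_book_title": "book_details/title",
    "src_book_author": "book_details/author",
}
row = {name: get_by_selector(book, selector) for name, selector in fields.items()}
print(row["src_book_title"])   # The definite guide to Drupal 7
print(row["src_book_author"])  # Benjamin Melançon et al.
```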

In this example, the target is a single paragraph type, but a similar technique can be used to migrate multiple types. One way to structure the JSON file is to give each record two properties: `paragraph_id` would contain the unique identifier for the record, and `paragraph_data` would be an object with a property to set the paragraph type. That object would also have an arbitrary number of extra properties with the data to be migrated. In the process section, you would iterate over the records to map the paragraph fields.
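Here is a Python sketch of that idea: the paragraph type stored in each record decides which fields get mapped. It models the logic only and is not Migrate API configuration; in a real migration this would live in the process pipeline. The `type` property name, the `quote` paragraph type, the `field_ud_quote_text` field, and the sample values are all hypothetical.

```python
# Hypothetical records laid out as described above. The "type" key, the "quote"
# paragraph type, and the field_ud_quote_text field are invented for illustration.
RECORDS = [
    {
        "paragraph_id": "PG1",
        "paragraph_data": {
            "type": "book",
            "title": "The definite guide to Drupal 7",
            "author": "Benjamin Melançon et al.",
        },
    },
    {
        "paragraph_id": "PG2",
        "paragraph_data": {"type": "quote", "text": "A hypothetical quote."},
    },
]

# Per-type mapping: destination field -> property inside paragraph_data.
FIELD_MAPS = {
    "book": {
        "field_ud_book_paragraph_title": "title",
        "field_ud_book_paragraph_author": "author",
    },
    "quote": {"field_ud_quote_text": "text"},
}


def to_paragraph(record):
    """Pick the field map from the record's type, then copy the mapped values."""
    data = record["paragraph_data"]
    paragraph_type = data["type"]
    values = {dest: data.get(prop) for dest, prop in FIELD_MAPS[paragraph_type].items()}
    return {"id": record["paragraph_id"], "type": paragraph_type, **values}


for record in RECORDS:
    print(to_paragraph(record))
```

Keeping the type next to the data means one source file can feed several paragraph bundles, at the cost of a per-type mapping somewhere in the migration.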

The following snippet shows part of the process configuration of the paragraph migration:

```yaml
process:
  field_ud_book_paragraph_title: src_book_title
  field_ud_book_paragraph_author: src_book_author
```

Migrating images from a JSON file

Let’s consider an example where the records to migrate have more data than needed. The following snippets show part of the local JSON file and source plugin configuration for the image migration:

```json
{
  "data": {
    "udm_photos": [
      {
        "photo_id": "P01",
        "photo_url": "https://agaric.coop/sites/default/files/pictures/picture-15-1421176712.jpg",
        "photo_dimensions": [240, 351]
      },
      {...},
      {...}
    ]
  }
}
```

```yaml
source:
  plugin: url
  data_fetcher_plugin: file
  data_parser_plugin: json
  urls:
    - modules/custom/ud_migrations/ud_migrations_json_source/sources/udm_data.json
  item_selector: /data/udm_photos
  fields:
    - name: src_photo_id
      label: 'Photo ID'
      selector: photo_id
    - name: src_photo_url
      label: 'Photo URL'
      selector: photo_url
  ids:
    src_photo_id:
      type: string
```

The `plugin`, `data_fetcher_plugin`, `data_parser_plugin` and `urls` configurations have the same values as in the node migration. The `item_selector` and `ids` configurations are slightly different to represent the path to image records and the unique identifier field, respectively.

The interesting part is the value of the `fields` configuration. Taking `/data/udm_photos` as a starting point, the records with image data have extra properties that are not used in the migration. Particularly, the `photo_dimensions` property contains an array with two values representing the width and height of the image, respectively. To ignore this property, you simply omit it from the `fields` configuration. In case you wanted to use it, the selectors would be `photo_dimensions/0` for the width and `photo_dimensions/1` for the height. Note that you use a zero-based numerical index to get the values out of arrays. Like with objects, a slash (/) is used to separate each level in the hierarchy. You can go as far as necessary in the hierarchy.
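The Python sketch below shows both points: properties left out of `fields` never reach the row, and a numeric segment such as `0` indexes into an array. The `get_by_selector` helper is hypothetical and only models the selector syntax described above.

```python
def get_by_selector(record, selector):
    """Resolve a selector such as 'photo_dimensions/0' inside one record."""
    value = record
    for part in (p for p in selector.split("/") if p):
        # Arrays are addressed with a zero-based numeric index, objects by key.
        value = value[int(part)] if isinstance(value, list) else value[part]
    return value


photo = {
    "photo_id": "P01",
    "photo_url": "https://agaric.coop/sites/default/files/pictures/picture-15-1421176712.jpg",
    "photo_dimensions": [240, 351],
}

# Only the two configured fields are copied; photo_dimensions is simply ignored.
fields = {"src_photo_id": "photo_id", "src_photo_url": "photo_url"}
row = {name: get_by_selector(photo, selector) for name, selector in fields.items()}
print(sorted(row))  # ['src_photo_id', 'src_photo_url']

# If the dimensions were needed, these selectors would return width and height.
print(get_by_selector(photo, "photo_dimensions/0"))  # 240
print(get_by_selector(photo, "photo_dimensions/1"))  # 351
```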

The following snippet shows part of the process configuration of the image migration:

```yaml
process:
  psf_destination_filename:
    plugin: callback
    callable: basename
    source: src_photo_url
```
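The `callback` plugin passes `src_photo_url` to PHP's `basename()`, which keeps whatever follows the last slash. The Python analogue below uses `posixpath.basename` to show the resulting file name. The query-string variant is an optional, hypothetical refinement that the migration above does not perform.

```python
import posixpath
from urllib.parse import urlsplit

url = "https://agaric.coop/sites/default/files/pictures/picture-15-1421176712.jpg"

# Same idea as `callable: basename`: keep what follows the last slash.
print(posixpath.basename(url))  # picture-15-1421176712.jpg


def filename_from_url(url):
    """Hypothetical variant: drop any query string before taking the basename."""
    return posixpath.basename(urlsplit(url).path)


print(filename_from_url(url + "?v=2"))  # picture-15-1421176712.jpg
```

A plain basename would keep a query string such as `?v=2` as part of the file name, which is the case the variant guards against.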

JSON file location

Important: What is described in this section only applies when you use the `file` data fetcher plugin.

When using the `file` data fetcher plugin, you have four options to indicate the location of the JSON files in the `urls` configuration:

- Use a relative path from the Drupal root. The path should not start with a slash (/). This is the approach used in this demo. For example, `modules/custom/my_module/json_files/example.json`.

- Use an absolute path pointing to the JSON location in the file system. The path should start with a slash (/). For example, `/var/www/drupal/modules/custom/my_module/json_files/example.json`.

- Use a fully-qualified URL with any built-in stream wrapper, such as `http`, `https`, `ftp`, `ftps`, etc. For example, `https://understanddrupal.com/json-files/example.json`.

- Use a custom stream wrapper.

Being able to use stream wrappers gives you many more options. For instance:

- Files located in the public, private, and temporary file systems managed by Drupal. This leverages functionality already available in Drupal core. For example: `public://json_files/example.json`.

- Files located in profiles, modules, and themes. You can use the System stream wrapper module or apply this core patch to get this functionality. For example, `module://my_module/json_files/example.json`.

- Files located in AWS Amazon S3. You can use the S3 File System module along with the S3FS File Proxy to S3 module to get this functionality.
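Choosing among these options comes down to how each `urls` entry begins. The Python sketch below sorts the example locations from this section into those categories. It only mirrors the lists above and does not reproduce how Migrate Plus or PHP actually open the files; `classify_location` is a hypothetical helper.

```python
from urllib.parse import urlsplit

REMOTE_SCHEMES = {"http", "https", "ftp", "ftps"}


def classify_location(location):
    """Roughly sort a `urls` entry into the categories described above."""
    scheme = urlsplit(location).scheme
    if scheme in REMOTE_SCHEMES:
        return "fully-qualified URL"
    if scheme:
        # public://, private://, module://, and similar Drupal-level wrappers.
        return "custom stream wrapper"
    if location.startswith("/"):
        return "absolute path"
    return "relative to the Drupal root"


examples = [
    "modules/custom/my_module/json_files/example.json",
    "/var/www/drupal/modules/custom/my_module/json_files/example.json",
    "https://understanddrupal.com/json-files/example.json",
    "public://json_files/example.json",
    "module://my_module/json_files/example.json",
]
for location in examples:
    print(f"{classify_location(location):28} {location}")
```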

Migrating remote JSON files

Important: What is described in this section only applies when you use the `http` data fetcher plugin.

Migrate Plus provides another data fetcher plugin named `http`. Under the hood, it uses the Guzzle HTTP Client library. You can use it to fetch files using any protocol supported by `curl`, like `http`, `https`, `ftp`, `ftps`, `sftp`, etc. In a future blog post we will explain this data fetcher in more detail. For now, the `udm_json_source_node_remote` migration demonstrates a basic setup for this plugin. Note that only the `data_fetcher_plugin` and `urls` configurations are different from the local file example. The following snippet shows part of the configuration to read a remote JSON file for the node migration:

```yaml
source:
  plugin: url
  data_fetcher_plugin: http
  data_parser_plugin: json
  urls:
    - https://udrupal.com/files/udm_remote.json
  item_selector: /data/udm_people
  fields: ...
  ids: ...
```

And that is how you can use JSON files as the source of your migrations. Many more configurations are possible. For example, you can provide authentication information to get access to protected resources. You can also set custom HTTP headers. Examples will be presented in a future entry.

What did you learn in today’s blog post? Have you migrated from JSON files before? If so, what challenges have you found? Did you know that you can read local and remote files? Please share your answers in the comments. Also, I would be grateful if you shared this blog post with others.